Wednesday, April 22, 2026

Our feedback on --> Cautious hope: Prospects and perils of communitarian governance in a Web3 environment

I discovered the paper in 2026 and sent my feedback to Nancy; that feedback was the first version of this document. She got back to me the next day with her response to my feedback, which I integrated into this final version.

Friday, March 20, 2026

Nondominium - A Coordination Layer for Parallel Infrastructure

Executive Summary

This is not a small market problem. Global supply chains alone account for over $10 trillion in annual intermediate goods trade, while infrastructure investment requirements exceed $3.3 trillion annually and rise toward $7 trillion when climate-adjusted needs are included (McKinsey, 2020; Woetzel et al., 2016; OECD, 2017). Yet recent evidence suggests that the binding constraint is often not capital itself, but coordination: the ability to govern interdependent assets, actors, and processes across fragmented institutional boundaries (World Bank, 2020).

Tuesday, November 4, 2025

From Digital Commons to Coordinated Commons: How Web3 Solves Open Source Fragmentation

The open-source movement is founded on the principle of sharing. Developers are granted the freedom to view, use, modify, and distribute code, a pool of shared knowledge known as digital commons. This freedom, however, carries an inherent paradox: the ease of copying code often leads to unwanted proliferation or project fragmentation, draining resources and creating chaos for users.

This post explores the governance, social, and economic failures inherent in traditional open-source development and presents a new paradigm: using Web3 primitives like blockchain, NFTs, and DAOs to enforce coordination, accountability, and sustainable development.

Wednesday, October 15, 2025

Complexity Rising: Are We Living Through the Collapse of Hierarchy?

Collapse rarely looks like fire and ruins. Most often, it feels like drift, a slow unraveling of institutions, the loss of confidence in systems that once seemed unshakable, a spreading sense that no one is in control anymore. The question that haunts our time is not whether society will collapse, but whether it already is.

Over the last few decades, researchers across economics, ecology, and complexity science have been circling around the same idea: our world is entering a transition phase. The mechanisms that once allowed civilization to grow and adapt are breaking down. And yet, from the midst of that breakdown, a new kind of order is trying to emerge.

Tuesday, October 7, 2025

Epistemology of Organizations in an Age of Complexity: Firms, Open Networks, and AI

As the global economy grows more complex, dynamic, and uncertain, the epistemic fitness of organizational forms—how they sense, validate, decide, and learn—becomes central to innovation capacity and long-term performance. This essay outlines a research program to develop a comparative framework for the epistemology of organizations. It contrasts centralized firms (pre- and post-digital) with open networks (peer-to-peer) and explores the transformative potential of hybrid AI–P2P epistemic models. Grounded in established literature from Coase and Williamson to Benkler and modern complexity theorists, this work situates its urgency in the context of rising systemic turbulence. The central argument is that in an era defined by uncertainty and complexity, the competitive advantage shifts from efficiencies of scale to efficiencies of learning, anticipation and coherent joint action, making organizational epistemology the critical determinant of future economic dominance.

Monday, September 22, 2025

Beyond Open Access: How Peer-to-Peer Principles are Building the Next Generation of Scientific Tools

The term "Open Science" is rapidly gaining traction in academic circles. For many, it signifies a move towards greater transparency and accessibility, primarily through open-access publications and the sharing of research data. This is a crucial and welcome development, breaking down paywalls and fostering a more collaborative scientific discourse. But what if this is just the tip of the iceberg? The principles underpinning open science are part of a much deeper societal transformation, one that is changing not only how we share knowledge but also how we create, innovate, and produce.

This shift extends far beyond the university walls. We see it in the digital infrastructure that powers our world, with open-source software like Linux running the vast majority of web servers. We see it in our quest for knowledge, where collaborative endeavors like Wikipedia have built a comprehensive encyclopedia, contributed to by a global network of volunteers. We see it in media, finance, and manufacturing, where decentralized and collaborative models are challenging traditional, top-down institutions.

Thursday, September 11, 2025

Composting Capitalism: How Peer Production Reduces Waste and Builds a Regenerative Economy

But ecosystems thrive precisely because death is followed by composting. Fallen matter is recycled into fertile ground for new growth. In this essay, we argue that commons-based peer production (CBPP)—the collaborative creation of digital and material goods outside market logics—functions more like an ecosystem’s forest floor than capitalism’s landfill. By reducing resource misallocation, improving recycling of knowledge and materials, and minimizing losses from organizational “death,” CBPP offers a regenerative economic logic better suited to a resource-constrained planet.

Wednesday, September 3, 2025

The Missing Bridge: Why Complexity Economics and Peer-to-Peer Must Converge

There is a puzzle at the heart of our economic future. On one side stands the Santa Fe Institute (SFI) and its decades-long work on complexity economics — models of self-organization, emergent order, adaptive systems, and networked coordination. On the other side stand the theorists and practitioners of the peer-to-peer (P2P) economy — commons-based peer production, open source software, blockchain, DAOs, and open value networks.

Both are deeply concerned with the same reality: economies as complex adaptive systems. And yet, strangely, these two intellectual worlds rarely acknowledge one another. Even stranger, some within each camp view the other with skepticism or even disdain.

But this gap is a problem. It must be bridged. Because P2P is no longer a fringe utopia — it is an established, indispensable mode of production. And complexity economics is no longer a fringe critique of neoclassical orthodoxy — it is a robust and maturing science. Together, they could form the intellectual foundation for a new economic paradigm.

Tuesday, September 2, 2025

Are We Living Through Collapse? Complexity, Digital Technology, and the Future Beyond Capitalism

The word “collapse” usually conjures images of sudden catastrophe: cities abandoned, empires falling overnight, institutions crumbling in chaos. But collapse can also look much slower — a gradual unraveling where the signs are everywhere but hard to pin to a single moment. More and more scholars are beginning to argue that this is where we are today: global society is in the midst of collapse.

This doesn’t mean the world will end tomorrow. It means that the institutions and economic logics that sustained industrial modernity — capitalism, liberal democracy, and even state socialism — are increasingly unable to cope with the world they have helped create.

The Case for Collapse

The idea that we are living through collapse is not new, but it has gained momentum. In The Epochal Crisis of Global Capitalism (2024), William Robinson describes a multidimensional breakdown: economic stagnation, political disillusionment, deepening inequality, ecological tipping points, and rising geopolitical conflict. For Robinson, this is not just another downturn — it’s an epochal crisis, one that capitalism cannot resolve within its own logic.

Friday, July 25, 2025

Beyond the Ledger: Why Blockchain and DAOs Fall Short for Complex Economic Organizations, and How OVNs Point the Way Forward

The Global Economic Transition

We are witnessing a profound transformation in the architecture of global economic activity. The traditional capitalist system, rooted in firm hierarchies, proprietary assets, and market-based transactions, is giving way to a networked economy. This emergent configuration is typified by commons-based peer production (CBPP), open source collaboration, distributed knowledge networks, and peer-to-peer (p2p) collaboration. Examples abound: permissionless blockchains, distributed scientific research initiatives, decentralized media platforms, and open educational resources.

In parallel, the digital infrastructures enabling these formations are evolving. Initially celebrated as a breakthrough in decentralized coordination, blockchain technologies and Decentralized Autonomous Organizations (DAOs) are revealing inherent limitations when tasked with modeling complex economic processes and sustaining full-fledged economic organizations. In contrast, newer agent-centric approaches, such as the Open Value Network (OVN) model built on Resource-Event-Agent (REA) accounting, the Valueflows vocabulary, hREA logic, and Holochain as a distributed substrate, are showing greater promise.

This blog post draws from the experience of real-world p2p production networks such as Sensorica, and analyzes the foundational limitations of blockchain/DAO-based systems while advocating for a hybrid architecture of economic coordination.

Thursday, May 1, 2025

Integrating Holochain, Mattereum and OVN: A Framework for Peer Production

Sacha Pignot has kindly shared the latest blog from his own Alternef Digital Garden with us at hAppenings.community this week! Sacha introduces one of his visionary projects: a revolutionary convergence of decentralizing technologies, namely Holochain, hREA, and Mattereum. He walks us through the key elements of his integration, exploring how these complementary tools empower resilient, sustainable economic models that prioritise cooperation and equitable value distribution. Sacha is a full-stack developer with a deep knowledge of decentralizing tech and peer-to-peer networks, and a passion for education and travel. Amongst the projects he’s contributing his talents to is hAppenings.community’s Requests & Offers HC/MVP. We’ll be sharing more about our progress in the coming months.

Read more here...

Tuesday, January 25, 2022

How Sensorica OVN handles material resources

Dive into Sensorica's OVN model

Sunday, May 9, 2021

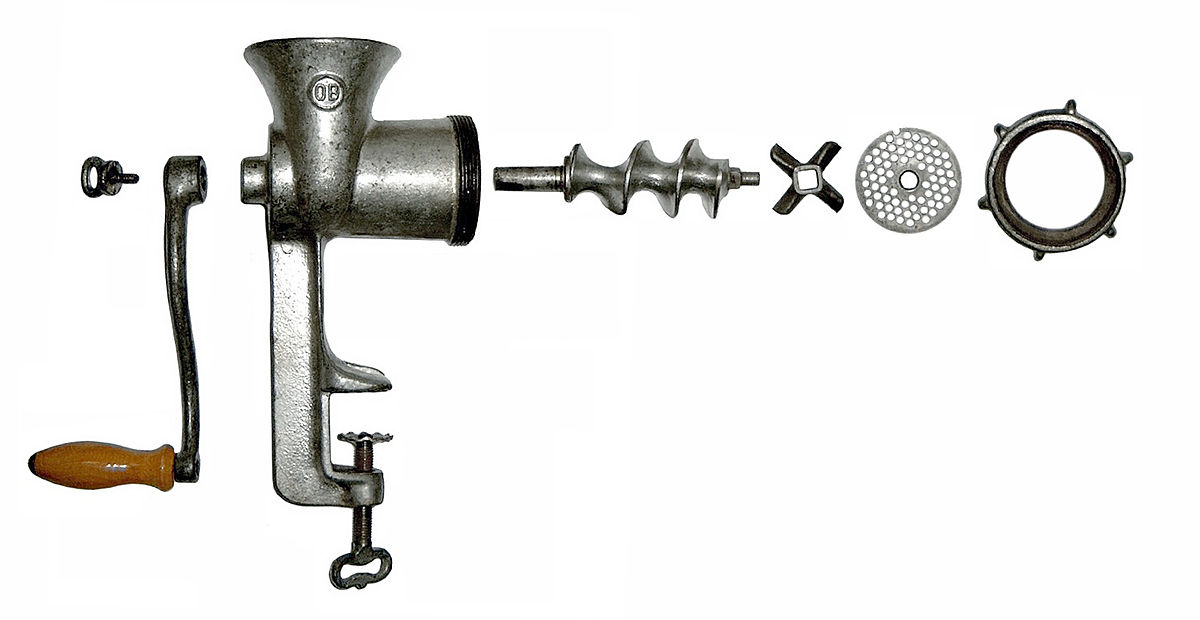

Next generation food machines and how Sensorica approaches food crisis

This is still a draft (third version). Come back for the final version, you'll probably be surprised.

--------------------------------

The irony is that when the proverbial S&!# hits the fan, supply chains are disrupted and some people die of starvation while surrounded by an abundance of local food sources. Our current economy is very well organized to maximize wealth generation, which means balancing the fulfillment of the population's desires and needs with making money. But at the same time, our economy is unsustainable and fragile. It relies on a web of centralized systems, designed during the industrial era, which are themselves fragile. If one of them fails, the others follow. For example, during the 2008 financial crisis people still needed to eat and farmers still possessed the land and the machines to produce food, but the global food market was greatly disrupted. In capitalism, the market is supposed to readjust to fulfill a new need, but that cannot happen fast enough when the economic infrastructure itself is affected by a crisis. In socialism, or planned economies, the government is not well suited to deal with complex situations. Food supply chains can be disrupted by regional armed conflicts, oil price fluctuations, financial system collapse, bad weather, or pandemics, to name just a few causes, without digressing into nuclear events and asteroid collisions. The best way to deal with these situations is a mix of bottom-up and top-down approaches within a commons-based peer production framework.

[Image: Store in Venezuela, the country with the largest oil reserves.]

In normal times, entire populations depend on staples produced by industrial agriculture. These products are designed to concentrate a lot of nutrients and, in normal times, it makes economic sense to consume them. The paradox is that when access to these products is disrupted, people can starve while being surrounded by an abundance of food. Many edible indigenous plants grow around us, but we have forgotten how to prepare them. I live in Canada, and the great majority of food products available in grocery stores are not indigenous. In times of crisis we eat what we find. Are we prepared to process local food sources?

[Image: Delicious Romanian nettle soup]

Modern technology allows us to more efficiently extract and concentrate nutrients from indigenous plants that are not part of our diet in normal times, as they compete with modern agriculture products.

If one day nettles are the only remaining thing that we can eat, we'll need time to learn how to prepare them. To get the protein equivalent of a steak, one needs to eat a 25 kg stack of nettles. Obviously no one can have that in a single serving. In times of crisis, knowing how to make nettle soup is not enough. We need to learn how to extract and concentrate the protein, and how to cook a delicious meal, much like a filling tofu dish. That requires learning time and specialized tools. If it is not in our culture to eat nettles, it is hard in normal times to convince people to learn how to prepare them or to invest in equipment to process them.

How can we tap into this latent food capacity and always be prepared for rough times? The solution proposed by Greens for Good, an open venture nurtured within the Sensorica OVN, is a versatile food processor that in normal times can mill corn, extract oil from seeds, make tofu, make noodles, and all sorts of other things, while also being able to process edible indigenous plants. On top of guaranteeing food security, this technology also makes food more sustainable by expanding our food sources and by encouraging consumption of plant-based proteins instead of animal protein. This seems like a very ambitious plan, but we are not in uncharted territory.

In recent years, 3D printing has revolutionized fabrication. A 3D printer is a versatile technology that can come in the form of a desktop machine able to make toys, and can be easily scaled to a larger rig that can build an entire house in a single day. Almost anything that we can imagine, of any shape and of practically any type of material, can be 3D printed. Sensoricans' plan for food processing is similar to what 3D printing has done for fabrication.

3D printing was invented in the 1980s. The expiry of the Fused Deposition Modeling (FDM) patent in 2009 marked the beginning of the 3D printing revolution, with the open source hardware community swarming this technology. Sensorica's economic model builds on the open source mode of innovation, inheriting the same properties of rapid development and viral dissemination. But it adds an economic layer on top of the innovation model to ensure proper dissemination, cultural appropriation, and adoption of the new technology, thus increasing its impact.

As of September 2022, the network is focused on prototyping and testing an open source decanter centrifuge, a critical component of the extruder, responsible for separating fluids and solids according to their difference in density.

Have an idea about how to improve this post? Please leave comments below.

Thursday, November 22, 2018

eco2FEST – Week 2: Building Society from the Ground Up

Public Policies

Governance

Habitation

Urban Agriculture and Food Sovereignty

Winluck Wong is a freelance writer helping companies grow their businesses through blogging, web content writing, copywriting, and social media management. He gets excited about an eclectic mix of topics from business strategies and sustainable development to personal finance and life hacks. Follow his cheeky musings on Twitter and imagine how he can fit in your story on his website.

Tuesday, November 13, 2018

eco2FEST - Week 1: Collaboration in Motion

Mobility

eco2FEST was aptly jumpstarted with the first theme, Mobility. Progress has always traveled on the back of our ability to transfer ideas, resources, and people over great distances in the shortest amount of time possible. The need for this ability to be efficient has become more pronounced with the growing urbanization of society. As ecosystems of urban jungles continue to spread, mobility is what will preserve their delicate balance and keep them thriving. Without mobility as the primary consideration in infrastructure design, cities run the risk of a drawn-out but inevitable decay.

It’s a well-recognized issue and many cities throughout the world have ventured ahead with innovative models of mobility. Take Barcelona and the Bicing program, for instance; London and its GATEway project; or Guangzhou’s Bus Rapid Transit system.

And we can do the same here. Montreal is the ideal city for mobility innovation, as was evident when the city hosted the Intelligent Transport Systems (ITS) World Congress last year. The world came to us last year, and this year we’re demonstrating through eco2FEST that we’re ready to bring our ingenuity to the world.

The official launch of eco2FEST last week inspired a lot of frank discussions about the future of mobility in Montreal. Mobility entrepreneurs like Eva Coop and OuiHop’ met with local residents as well as citizen groups like Trainsparence. This open forum invited everyone to give their input on major mobility issues such as the “last mile” problem and bike-integration solutions, and on local concerns such as mobility challenges in Verdun.

It is a promising start to eco2FEST. Jean-François Parenteau, the Mayor of Verdun, mentioned at the eco2FEST press conference last Tuesday that he and his elected officials wanted the borough to shine when it comes to a collaborative economy. It captured the intent of eco2FEST perfectly. This is our moment to take the lead by getting the private and public sectors together to collaborate on inclusive solutions that improve the day-to-day lives of everyone.

Collaborative Economy

As the conversation moved from Mobility to the second theme, Collaborative Economy, the ideas continued to flow. Although the participants in the workshops and round tables were from different backgrounds, they all found common ground with these two statements:

- Access to resources can be very difficult for individuals; collaborative work-spaces can meet this requirement as well as create opportunities and accessibility for entrepreneurs;

- Collaborative economy adds social and economic benefits within society.

Moreover, certain words came up again and again that showed how strongly they resonated with everyone: inclusion, participation, equity, growth, and empowerment. All these are values that a collaborative economy strives toward.

It means fewer constraints from a traditional workplace and less emphasis on profit before all else. It means more innovation and ecological results driven for and by the community. Above all, it means genuine collaboration that goes beyond the family unit and reaches every facet of society.

What’s striking about the first week at eco2FEST was how deeply engaged all the participants were in the discussions that took place. Everyone had something of value to contribute and it’s all these ideas in aggregate that will make a difference as we co-build the society of tomorrow.

All this came out of just the first week. Imagine what we can accomplish next. Find out what’s happening this week at eco2FEST on our schedule and participate!

What really spoke to you during the first week of eco2FEST? What would you like to see done differently in the upcoming workshops and conferences?

---------------------

Winluck Wong is a freelance writer helping companies grow their businesses through blogging, web content writing, copywriting, and social media management. He gets excited about an eclectic mix of topics from business strategies and sustainable development to personal finance and life hacks. Follow his cheeky musings on Twitter and imagine how he can fit in your story on his website.

Wednesday, August 9, 2017

MATRIOSHKA MOBILITY HUB

For networked, inclusive, and sustainable mobility

A good example of a corridor in Montreal is the new Promenade Fleuve-Montagne, which creates a pedestrian link between the St. Lawrence River, to the south, and Mount Royal, to the north, offering opportunities for encounters, places to pause, and green passages.

Patches / zoning

The Matrix

Corridors

Metapopulation

Metacommunity

Percolation